Anchoring is a form of cognitive bias where we as users tend to place increased emphasis on the first choice we are given when forming a decision. Anchoring and Adjustment explains how we tend to take the information provided by the anchor, and use that as the benchmark for any adjustments we later make based on additional information we receive.

An everyday example: we find a product we want to buy at a certain price or discount, go away, and come back the next day to buy it, only to find the price has increased. The principle of anchoring, and the way we base adjustments on that initial anchor, means this should have a negative effect on the user; yesterday the product was at the lower price and now it’s higher. Outside our personal window of reality the product has just returned to its base price, but our brain is anchored to that initial perception of the lower price. We feel that the price we should pay is the initial lower one, and that the higher price, which is actually the original one, is the wrong price.

Similarly, if we return the next day and find the product cheaper than it was on our first visit, that will tend to influence us more positively. “Ooh, bargain,” our tiny minds may think, as they adjust based on the anchor of that initial higher price.

Practical test of the effects of Anchoring and Adjustment

Studies have shown that Anchoring and Adjustment affects people’s decision making, especially at the point of purchase. However, I was curious, and I’m the sort of guy who likes to test things out on groups of unwitting subjects for my own pleasure. So I fell back on my old trick of group emailing everyone at my work with a test…

Hi there *name*. Mondays eh? Rubbish, right? Well, I have a quick 2 minute test like thing for you to engage in and fully pass some time. Bonus: when your line manager walks past you can proudly give them the thumbs up, tell them you’re working hard, before flicking back onto that tab on Twitter. Get ready….

Scenario

So you’ve just got yourself a lovely new pet, a dog (or maybe a cat if you’re one of those dog-hating types….)

As a caring, diligent, law-abiding pet owner, you’ve just been to the vets to get the beast microchipped in case someone finds it just wandering the street unaccompanied.

After visiting the vet, he tells you you need to go register your pet’s chip with the database, but it’s something you can do at home.

Cut to back at home, your home, not here, maybe here. Depends I guess. Anyways, you’re there, you’re filling out your microchipping registration form, glass of wine in hand, and after completing initial sign up details, you are presented with a screen asking you which plan do you want to use for your pet. From the options on the link below, please email *JUST ME* in response and tell me which plan you’d take, and if you can be bothered, a quick few words of explanation of why.

Recipients were provided a link in the email to a pricing comparison table for an aftercare service for microchipping of pets. What the recipients had no idea about was that I had sent two versions of the email.

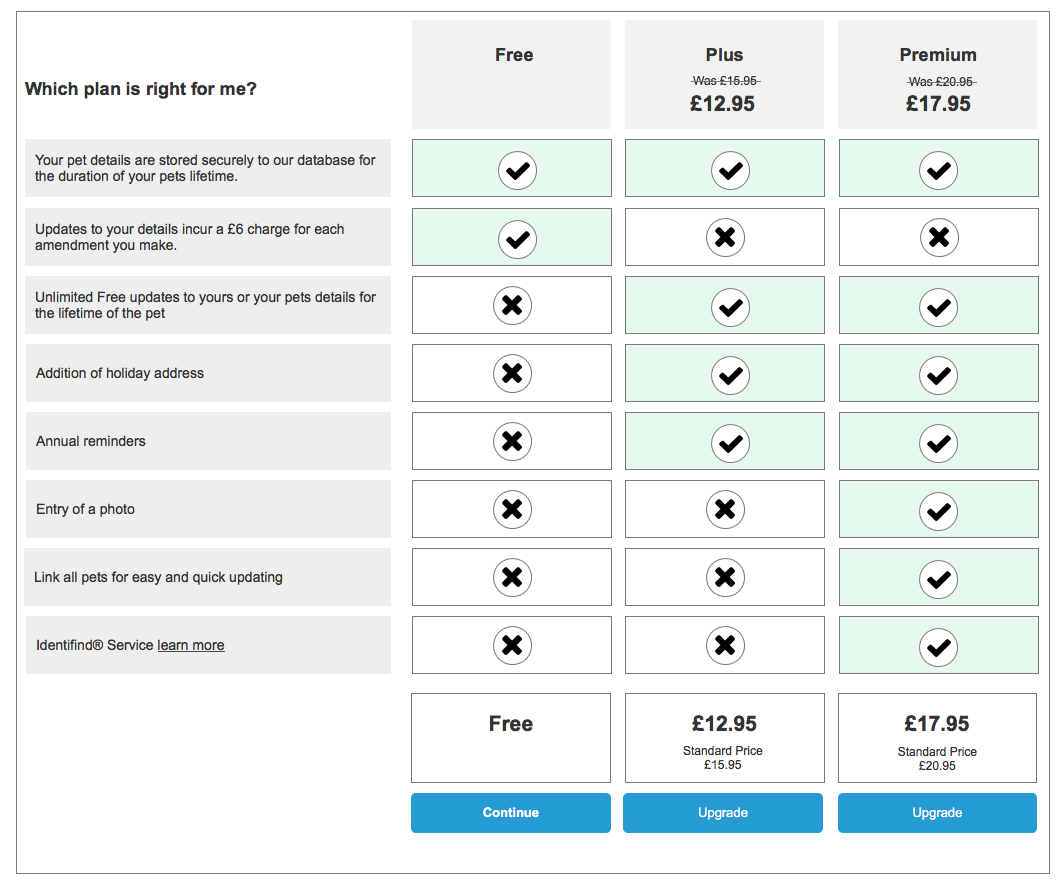

- Subjects in the A group received email one, in which the comparison table ran left to right from the free tier, through the medium tier, to the premium tier on the right

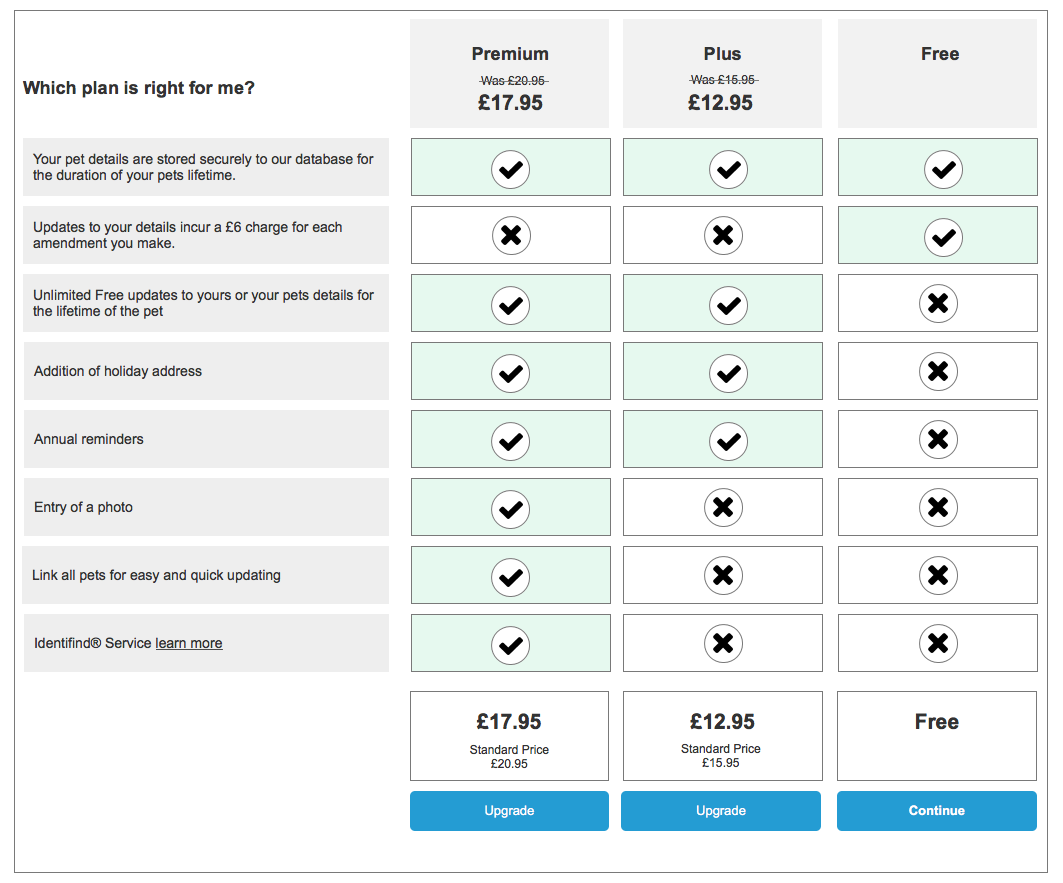

- Subjects in the B group received email two, in which the comparison table led with the premium tier on the left, with the free tier moved to the right

So pricing tiers increased from free up to expensive for the A subjects, and decreased from expensive down to free for the B subjects. The two tables can be seen above.

My trap laid and set, I kicked back to watch the results pour in. With absolutely no encouragement from me…

I’ve already had some fantastic responses to this, but if you have already answered this and the person next to you hasn’t can you punch them in the arm and tell them to respond to it please.

Results

That ought to do it, and it did. Results flooded in. I called it once I had a roughly even split between the two groups and a reasonable 30-odd responses, along with the usual assortment of replies calling me a nob for some reason. So, what was the result? Well, when everything was totalled up:

| Tier | Responses |
| --- | --- |
| FREE | 13 of total respondents would take the free account |
| PLUS | 7 of total respondents would go for the middle |
| PREMIUM | 11 of total respondents would pay top whack to have their pets back |

A relatively even split, with the free tier just taking it. But what happens when we split that back into the corresponding groups? Well:

Group A (received the comparison table with free tier leading left to right)

| Tier | Responses |
| --- | --- |
| FREE | 9 group A respondents would take the free account |
| PLUS | 3 group A respondents would go for the middle |
| PREMIUM | 4 group A respondents would pay top whack to have their pets back |

Group B (received the comparison table with premium tier leading left to right)

| Tier | Responses |
| --- | --- |
| PREMIUM | 7 group B respondents would pay top whack to have their pets back |
| PLUS | 4 group B respondents would go for the middle |
| FREE | 4 group B respondents would take the free account |

So what we have is a purely blind test: respondents had no idea they were being served a different table to other respondents, and thought the aim of the test was to compare the packages available. The sample is far too small to be statistically significant, but even so we start to see a pattern consistent with anchoring: the free tier was the most popular choice in group A (9 of 16), while the premium tier was the most popular in group B (7 of 15). Starting at either the free or the premium tier is likely to have an unconscious effect on which tier a user picks.
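For anyone who wants to put a rough number on "not statistically significant", here is a minimal sketch of a chi-square test of independence on the counts above. This is my own back-of-envelope check rather than part of the original test, and the function name `chi_square_independence` is made up for illustration. With cell counts this small the chi-square approximation is shaky, so treat the result as indicative only.

```python
import math

# Responses per group and tier, taken from the tables above.
observed = {
    "A": {"free": 9, "plus": 3, "premium": 4},
    "B": {"free": 4, "plus": 4, "premium": 7},
}
TIERS = ["free", "plus", "premium"]


def chi_square_independence(table):
    """Chi-square statistic and p-value for a 2x3 table (hypothetical helper)."""
    row_totals = {group: sum(counts.values()) for group, counts in table.items()}
    col_totals = {tier: sum(table[group][tier] for group in table) for tier in TIERS}
    total = sum(row_totals.values())

    chi2 = 0.0
    for group in table:
        for tier in TIERS:
            # Count we would expect if table order had no effect on choice.
            expected = row_totals[group] * col_totals[tier] / total
            chi2 += (table[group][tier] - expected) ** 2 / expected

    # A 2x3 table has (2-1)*(3-1) = 2 degrees of freedom, and for exactly
    # 2 degrees of freedom the chi-square tail probability is exp(-x/2).
    p_value = math.exp(-chi2 / 2)
    return chi2, p_value


def tier_shares(table):
    """Share of each tier within each group, as fractions of that group."""
    return {
        group: {tier: counts[tier] / sum(counts.values()) for tier in TIERS}
        for group, counts in table.items()
    }


if __name__ == "__main__":
    chi2, p_value = chi_square_independence(observed)
    print(f"chi-square = {chi2:.2f}, p = {p_value:.2f}")
    for group, shares in tier_shares(observed).items():
        print(group, {tier: f"{share:.0%}" for tier, share in shares.items()})
```

On these numbers the p-value comes out at roughly 0.24, well above the usual 0.05 threshold, which fits the caveat above: the pattern is suggestive, but a sample of 31 cannot confirm it.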

Takeaways for Research

When performing this test, I found that not letting people know they were in an A/B group was a real bonus. It turns out it’s great if your test subjects have no idea about the ultimate aim of your test, and even better if they are focussed on a tangentially related goal. This reduces bias in the answers. In the actual task, a couple of people responded with tangential feedback that wasn’t relevant to the question, but still completed the test with an answer in line with the expected results for their test group. It is natural for some people, when presented with a test or question, to try to figure out its intentions (I know I’m one of those people), and all of that extra thought introduces a degree of bias. But if that bias forms around a red herring, we can still get an unbiased answer to the question we are really asking.

A sidenote is that the task the respondent completed asked for comments on the tiers, again, not the point of the test, but this has provided a nice fat spreadsheet of comments around the products which can be fed back into the planning team who are doing some brand model work and now have a whole bunch of delicious and useful data points they weren’t expecting.

Takeaways for designs

One of the designers on my team emailed me before I released the results to ask:

The order of the services threw me a bit – does it normally start with cheap options on the left and working up to premium?

Well, before I did this test, I thought the same. In honesty, I expected both versions of the test to show more users taking the middle, or Plus, package, as it provides the middle-ground balance of features vs cost. I think the idea that we start with free and go up to expensive is a little bit of cognitive bias creeping in on the part of us designers. Certainly we see this pattern more often. However, this quick 10 minute test suggests that the order of your comparison table may benefit from a CRO test, to see if you can take better advantage of the inherent anchoring and adjustment a user may perform based on the order in which you present your products.
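If you do run the table order as a proper CRO test on a live site, each visitor needs to see the same version every time they come back, otherwise the anchor gets muddied. One common way to do that is to hash a stable user ID into a bucket. The sketch below is only an illustration of that idea; the function name, the experiment label and the ID format are all assumptions of mine, not something from the email test above.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "pricing-table-order") -> str:
    """Deterministically place a user in variant A or B (hypothetical helper).

    A = free tier on the left, B = premium tier on the left.
    """
    # Including the experiment name means the same user can land in
    # different buckets for different experiments.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 8 hex characters as an integer and split on even/odd.
    return "A" if int(digest[:8], 16) % 2 == 0 else "B"


if __name__ == "__main__":
    for uid in ["user-1", "user-2", "user-3"]:
        print(uid, assign_variant(uid))
```

Because the assignment depends only on the ID and the experiment name, there is nothing to store, and a returning visitor always gets the same table.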

Additional References for Anchoring and Adjustment

http://ui-patterns.com/patterns/Anchoring

https://en.wikipedia.org/wiki/Anchoring

https://www.nngroup.com/articles/anchoring-principle/

Updated: After chatting with @dave_stewart on Twitter, he forwarded me this article, which cites some good examples of advanced uses of anchoring and adjustment:

9 Ways to Make Your Expensive Product Look like a Total Steal