This post discusses the process I used on a recent app build project. My hope is that laying out this journey will help someone, somewhere, at some point with planning and delivering an app of their own.

As this article is quite long, you may want to use the following links to jump to a pertinent section:

- What’s the problem?

- How I solved the problem

- Interface Development

- Results on Device

- Next Steps

What’s the problem?

Remote controls, as a general rule, suck. They tend to be overcomplicated for the tasks a user actually needs 98% of the time: a landscape of plastic with a billion little plastic houses popping out of it, which leads users to invent innovative hacks to tame the thing. See below.

Remote control “hack” via @DesignAtLarge #HCI5 #UX pic.twitter.com/lpt8mA6hwL

— Ryan Kelly (@RKellyUX) July 29, 2014

Every new interface we encounter is a new language we have to learn. Sometimes the language is limited and we can figure it out quickly. Present a user with an entire dictionary when all they needed was the word for hello, however, and it’s obvious how overwhelming that can be. This means using the remote induces a little bit of fatigue each time. Each task takes a little longer as your brain scans the device for the pattern that tells you where the button you are looking for is located. The end result is that you hate the remote a little more every time you use it.

It’s this propensity to offer everything that puts users off, and AV receivers must be the guiltiest of all. I feel there is almost a snobbishness to it. The core user profile catered to here must like to see themselves as audiophiles with exquisite taste, who at a moment’s notice will need to tweak the most arcane of settings or control the most redundant of features. Maybe this is true, but catering to these users can be handled in software, either on-screen or via an app. My belief is that 99% of users, 99% of the time, just want to change the input source (maybe we should call that navigating in this context), the volume or the power state of the device.

Unexpected side effects of oversimplification

So we know that overcomplicating the remote leads to a non-optimal user experience. Well, what about ultra simplicity? That must be perfect, right? I’d mostly agree with that. Reducing the clutter makes it much easier to discern your action target. However, you can go too far.

I’m a big fan of Apple, but even I suffer from frustration over this thing. It’s not the number of buttons; that is perfect. The inclusion of volume and power controls for the TV is, again, a great usability win. But the 4th generation Apple TV remote can be annoying in ways its predecessor, with its button-based directional navigation, simply never was. This remote is so symmetrical, and its touch surface so very sensitive, that every time you pick it up you inevitably pick it up the wrong way round, triggering the touch surface and sending your timeline scrubber screaming wildly to the conclusion of whatever you were watching.

I’m not the only one tearing out clumps of hair over this. I actually think this is pretty much the best solution to the problem:

My new killer Apple accessory: I put an asparagus band around the bottom half so we know which end to pick up: pic.twitter.com/EB6OEx6K6T

— Jared Sinclair (@jaredsinclair) December 8, 2015

A simple “bedroom mode” toggle in system settings would solve this: an initial click on the touch surface would be cancelled if, within a specific amount of time, the accelerometer in the remote detected it going from a static position to moving. Apple’s palm rejection on devices like the iPad is amazing, so I find it strange that they can’t send a firmware update that monitors for this initial movement and rejects the input where necessary. Maybe in iOS 11, but I’m beginning to believe this is a hard limitation of the remote’s design.

Again, the Apple TV remote has an accompanying software app. The official one for the 4th generation Apple TV landed in 2016 to imitate the functionality of the then-new Siri Remote, and it works well enough*, apart from missing the volume controls. As of iOS 11, Apple have really stepped up their game in terms of accessing this remote on iOS devices: the Apple TV remote can now be accessed from Control Centre. Being able to access the controls from the lock screen with a swipe is a total usability win. Hopefully they expand this next year and allow developer apps to have windows in there.

The worst remote: my Pioneer Remote

Now, I have a first world problem with my Pioneer AV receiver’s remote. As alluded to above, it’s incredibly overcomplicated. The key buttons, except for volume, are too small, and the display doesn’t light up in the dark. Worst of all, it has a button located near the volume (so incredibly easy to press in this button-dense environment) that acts sort of like a Shift key on a keyboard. Hit it, which you do a lot, and all of a sudden the direct source buttons no longer function. It’s an awful user experience.

This remote completely failed “the partner test”. The partner test, of course, being: if this new piece of tech can’t integrate seamlessly with her natural order of things, she will make me take it away and not let it be in the house any more. I did not want that to happen, because the receiver really does make everything sound really, really good. The internal speakers in the TV were instantly put to shame by it, and I couldn’t go back knowing what I had already heard. But boy, even I can’t stand that hardware remote. She definitely had a point. It’s genuinely the worst remote I’ve ever handled. It’s the Shift button that really does it.

Pioneer software remote

Now, some Pioneer AV receivers are network capable. I, a tech-loving type, had naturally gravitated to the promise of one of these devices. A network-capable receiver is pretty useful, as it allows services such as Spotify and the use of AirPlay, which I use frequently. Along with all this network capability comes a native app or, in true industry style, an annual update of new apps that all look exactly the same, with no additional UX benefit, no increased visual fidelity and no new features that tap into new native capabilities.

The app should still be quicker to navigate than the hardware remote though, right? Wrong. This app is awful. First, your actions are gated behind a menu system that provides no quick access to controls. An absolutely bizarre navigation choice means you can never find the button you need when you need it (because of course all functions are located on separate pages). It relies on the user’s memory to recall whether the correct panel is an up, down, left or right swipe away.

There is another bizarre navigation choice you can enable in advanced settings mode, which converts what should be a table view into a lottery-style ball-dropping animation. It’s a fucking weird thing to have spent any development focus on, but it is in line with a lot of other bad or weird design choices.

How I fixed my Pioneer remote problem

When I realised that my Pioneer AV receiver was connected to my network via Ethernet, and that I had a terrible iOS app from Pioneer on my phone, I began to wonder if I could find a hack that would let me control the receiver from one of my own apps. I stumbled across this amazing post on how to do this via Telnet. That’s right, Telnet. You know Telnet? Well, I’d never heard of Telnet, but luckily Wikipedia informed me at the time that:

Telnet is a protocol used on the Internet or local area networks to provide a bidirectional interactive text-oriented communication facility using a virtual terminal connection.

So from this point I knew I could control my Pioneer AV receiver. I played around with the controls in Terminal and it all worked quite well*. The next question was whether I could figure out which APIs I could harness from Swift to let me do this in an iOS app.
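To make the idea of "controls typed into a Telnet session" concrete, here is a minimal sketch of how such commands could be modelled in Swift. It is not the app's actual code. The `ReceiverCommand` type, the two-letter command codes and the carriage-return terminator are all assumptions for illustration; the real strings should be taken from the receiver's own control documentation.

```swift
import Foundation

// Hypothetical command set. The codes below are assumptions for
// illustration and are not taken from the article; verify them against
// the receiver's control documentation before relying on them.
enum ReceiverCommand {
    case powerOn
    case powerOff
    case volumeUp
    case volumeDown
    case selectInput(code: String)

    /// The text that would be typed into the Telnet session,
    /// without any line terminator.
    var text: String {
        switch self {
        case .powerOn: return "PO"
        case .powerOff: return "PF"
        case .volumeUp: return "VU"
        case .volumeDown: return "VD"
        case .selectInput(let code): return code + "FN"
        }
    }

    /// The bytes to write to the connection. Assumes each command is
    /// terminated by a single carriage return.
    var wireData: Data {
        return Data((text + "\r").utf8)
    }
}
```

Keeping the strings in one enum means the rest of the app can talk about `.volumeUp` rather than raw text, and the command table can be swapped out if a different receiver uses different codes.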

Initial proof of concept work

The key to proving that I could build an app to satisfy my requirements was making sure it was even possible to Telnet into a compatible device from an iOS device. Handily, through my extensive playtime with the Raspberry Pi, I already had an app on my phone that proved the feasibility of this: Prompt by Panic, an SSH client for iOS. I frequently use it for SSH’ing into the Pi. Knowing that networking at this level was possible, and permitted by Apple on the store, I started investigating the networking APIs available to me in Xcode. The trick turned out to be opening a Stream which, handily, is a Foundation class. No having to go out and grab a framework to power it.

Stream objects provide an easy way to read and write data to and from a variety of media in a device-independent way.

Well, that sounded just dandy for my requirements and, after a bit of testing, proved to be exactly that. Connecting is quick. Messages sent from the amp to the phone app needed some filtering, as long messages would sometimes concatenate onto the next message, but nothing major. Sending commands worked great and the connection has proved solid.
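The filtering of concatenated messages could look something like the sketch below. It assumes, and this is an assumption rather than something stated above, that the amp ends each message with `"\r\n"` and that the bytes read from the stream have already been decoded to text. `MessageBuffer` is a hypothetical name, and the sample message strings in the usage example are made up.

```swift
import Foundation

/// Collects text as it arrives from the connection and hands back only
/// complete messages. Anything after the last delimiter is kept until
/// the rest of that message arrives.
struct MessageBuffer {
    private var pending = ""

    /// Appends a newly received chunk and returns every message it completes.
    /// Assumes messages are delimited by "\r\n".
    mutating func append(_ chunk: String) -> [String] {
        pending += chunk
        var parts = pending.components(separatedBy: "\r\n")
        // The final element is either empty (chunk ended on a delimiter)
        // or the start of a message that has not finished arriving yet.
        pending = parts.removeLast()
        return parts.filter { !$0.isEmpty }
    }
}

// Usage: two messages arrive glued together, with a third split across reads.
var buffer = MessageBuffer()
let first = buffer.append("VOL0")
let second = buffer.append("81\r\nFN04\r\nPW")
let third = buffer.append("R0\r\n")
```

The point of the design is that the read handler never has to care how the network happened to slice the data: it feeds in whatever arrived and acts only on whole messages.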

Initial feature development

Knowing how we used remotes, I knew our most common actions for the amp were:

- Volume control (including muting)

- Source control

- Power control

I’m going to assume this is common to all users, because these correspond to the only big, prominent dials and buttons on the amplifier itself. I had done an initial sketch once before, asking my partner what her dream universal remote would be. I can’t find it right now; if I find it I’ll scan it.

This work didn’t look anything like the finished result, and the functionality was way beyond what I wanted to do at the time, but it did yield one great little feature that I worked in as soon as I could. My partner had said she wanted a universal remote that gave her mouse control of the Mac mini, control of the Apple TV and the amp controls, all in one. That seemed like a huge challenge, especially as Apple doesn’t have any sort of public remote API for the Apple TV. What Apple does provide is custom URL schemes, which allow us to open apps from within apps. Where the app I’d want to open has a custom URL scheme, I can contextually change the source icon in the info panel into a button that opens the requisite app. Virgin, typically and uselessly, don’t have one, so I can’t kick open the Virgin remote. However, I can quickly open the Apple Remote app.
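As a rough sketch of that contextual button, the lookup could be a simple mapping from the current source to an optional URL. The `InputSource` cases and both scheme strings below are hypothetical placeholders, not the real schemes of any app. On iOS, the resulting URL would typically be handed to `UIApplication.shared.open(_:)`; that step is left out here so the example stays free of UIKit.

```swift
import Foundation

/// Input sources the remote might know about. Illustrative only.
enum InputSource {
    case appleTV
    case macMini
    case cableBox
}

/// Returns the URL that would launch a companion app for a source, or nil
/// when that app exposes no custom URL scheme (in which case the source
/// icon would stay a plain icon rather than becoming a button).
/// The scheme strings are hypothetical placeholders.
func companionAppURL(for source: InputSource) -> URL? {
    switch source {
    case .appleTV: return URL(string: "exampletvremote://open")
    case .macMini: return URL(string: "examplemouse://open")
    case .cableBox: return nil
    }
}

// Usage: decide whether the info panel shows a tappable launcher.
let showsLauncher = companionAppURL(for: .appleTV) != nil
```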

Interface development

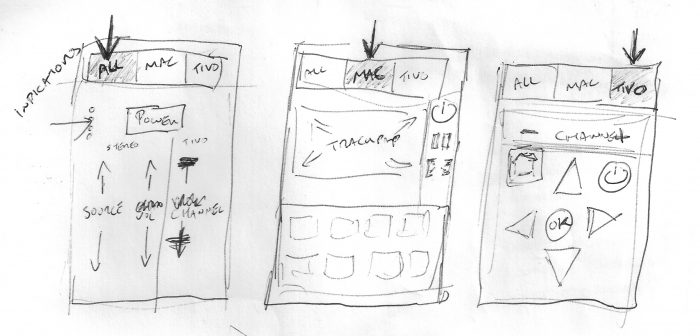

Below is the early UI development of the app. The interesting story here, in terms of UX, is how the priority of the page elements changed. Information and power naturally seemed to want to be at the top, out of the way. Power is a button I want to use, but not all the time. I thought I could get a cool info panel blending in with the iOS status bar, but this was visually cluttered and confusing. Putting the power button before the information also seems correct if you card-sort the elements in your brain: how can you have information before the device is on?

The next bit was one of those UI puzzles that made total sense once it clicked. As we established, the most common usage would be volume control. Since the most natural area for the user to reach is the centre, it makes sense to prioritise those buttons by putting them within that primary reach area. This area was to be a swipeable pad for moving the volume up and down. The thing is, by going down this route I was actually making it more difficult to reach the second most common action: the input source controls.

The diagram below is liberally stolen from another article, but rebuilt so I had it as a vector. The article I’ve linked to discusses how the natural reach area diminishes as the size of the device increases, making certain areas uncomfortable to reach. There is truth in this. Anecdotally, when I made the jump from the 4s to the 6, I moved all my most important apps, previously on the top row, down by two rows. The extra icon space gained from the increased height of the iPhone 6 became home to apps too important to lose in the depths of SpringBoard, but not so important that they were daily drivers.

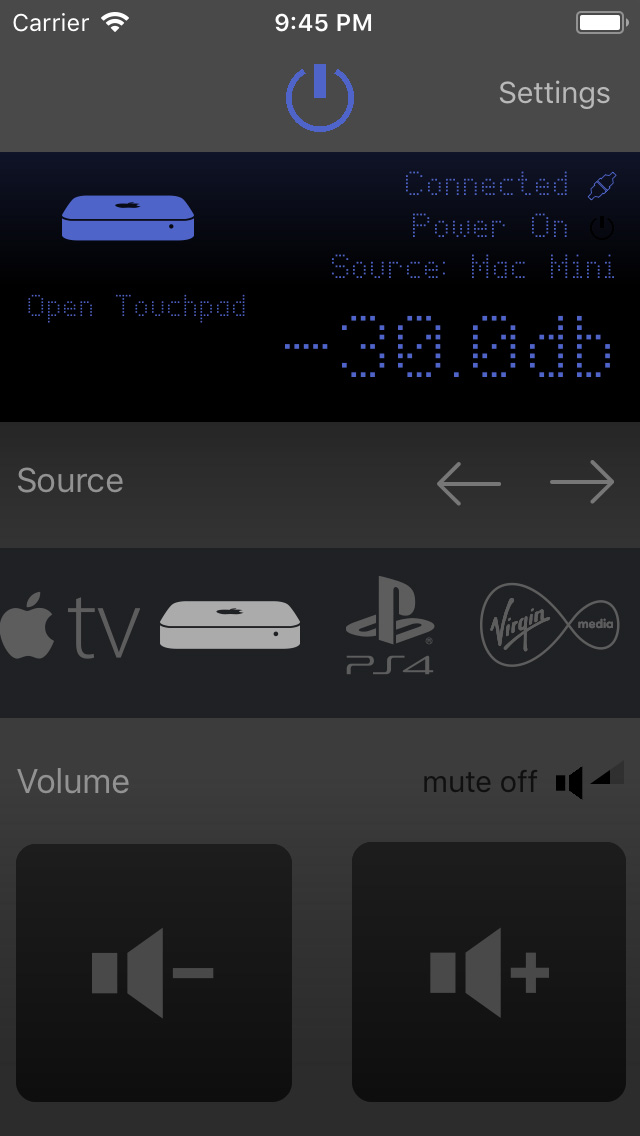

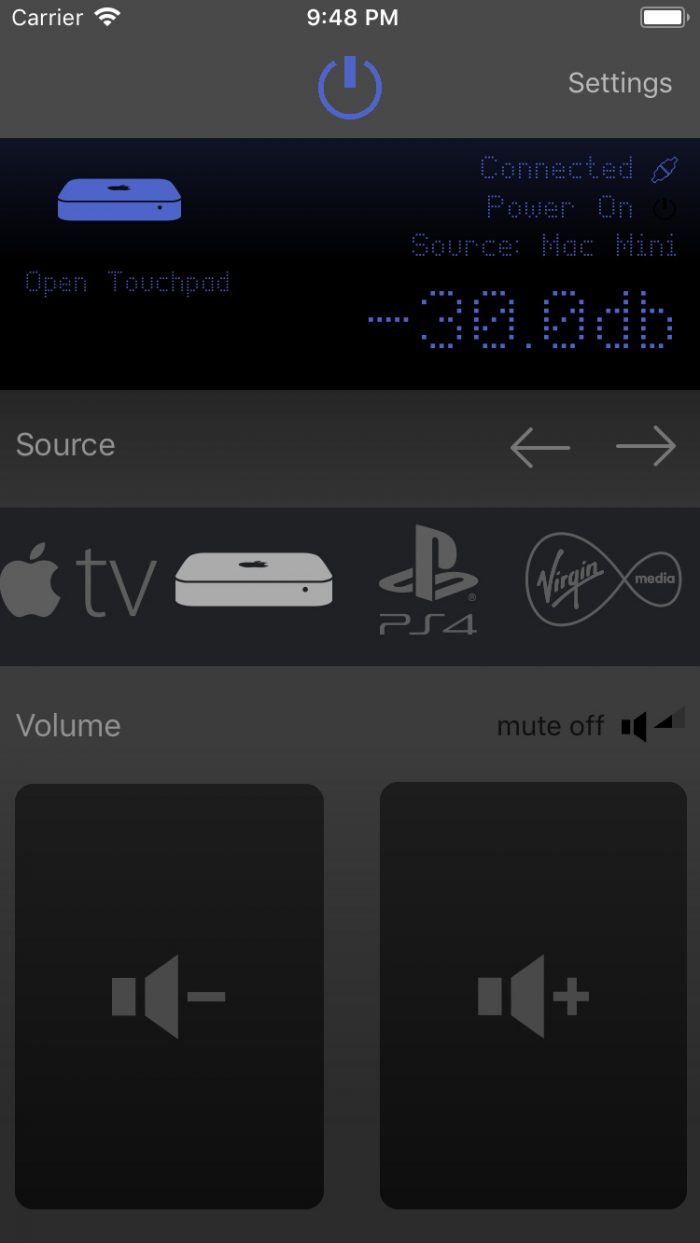

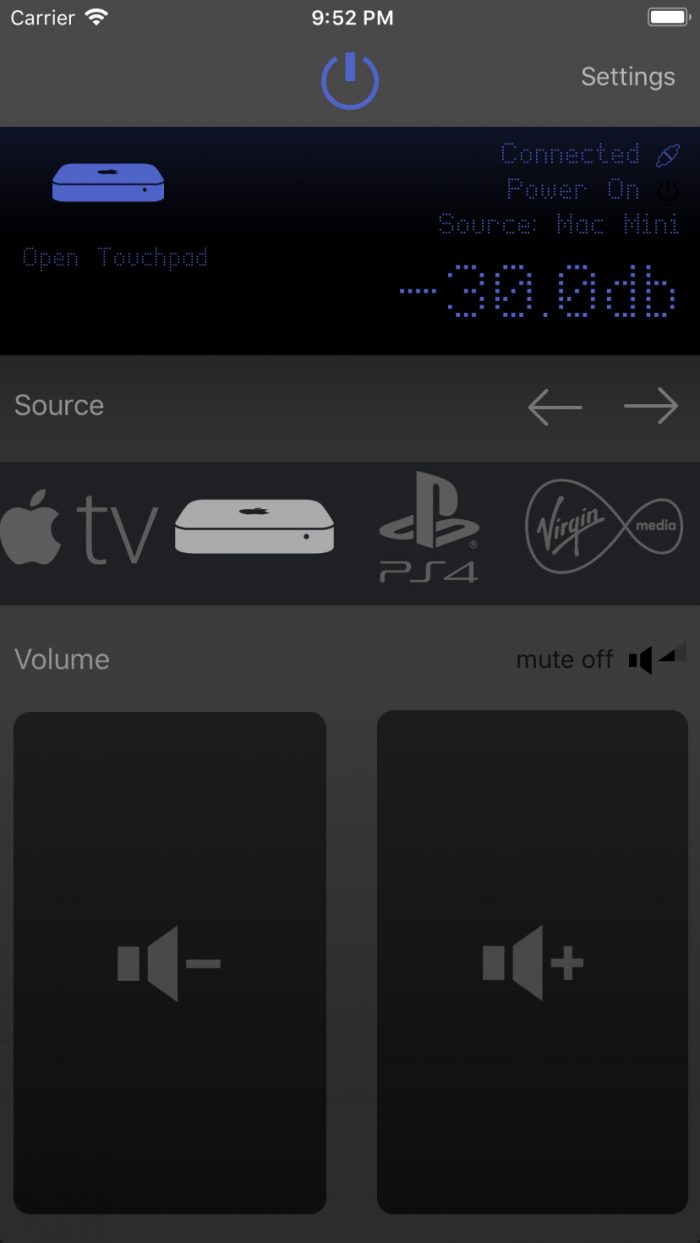

As the diagram above illustrates, there is a natural area towards the centre of the device for which we have optimal reach. The area diminishes as we go above and below that. For the power control, being more of a stretch isn’t an issue, because it really is tertiary in terms of usage. The input sources, which individually had a smaller tap area anyway due to their size, became more difficult to reach and to pick out individually in this low position. Then I had the brainwave to ditch the sliding volume pad and go with buttons, and to make them big. Moving them to the bottom had no impact on usability, because suddenly you have a massive tap area*. It was a really neat solution that has the bonus of working well with Auto Layout. On 4-inch devices the buttons are much more compact but still very reachable due to the smaller screen; as devices get larger, the hit area increases with the available space.

Interface skin

With the usability sorted, it was a quick hop into Photoshop (yes, I still use Photoshop for UI, eat me) to tidy up the developed ideas. As a designer and developer, I have the luxury of being able to mix both skillsets to speed things up somewhat, but I personally never care about visual polish until I have things working and in a relatively optimised state of usability. Nevertheless, this meant that by the time I brought the interface into Photoshop a lot of ideas had already taken shape, and it was really just a matter of experimenting with the nascent shapes and developing them. For instance, I’d already worked out that I wanted to mimic the feel of the AV receiver’s remote panel, so the black background and even the pixel fonts had taken shape. It was in Photoshop that I brought in gradients and worked out how black the background needed to be: the little ideas that are just so much easier to work out in an image editor.

Above are screen grabs from Simulator of the iPhone versions of the remote app after applying the tweaks I’d worked out in Photoshop. Well, after applying the Photoshop tweaks and then a load more tweaks on device.

Interface interaction animations

With the buttons for changing source inputs and volume, I knew I needed some animation states. This is because of the way commands work: the app sends a command to the amp (one action), then the amp processes the command (or doesn’t) and sends a message back to the app to confirm that the action has taken place (a second action). As the amp sometimes won’t process the command (say, because something is wrong with the connection), I needed a way for the user to see that the command has been sent but not fully acted upon. This is where the often-overlooked down states on button presses come in handy.

- When the source input is first pressed and the command is sent to the amp, the down-state animation happens and the button shape reduces in size.

- When the user releases their finger from the button, the up-state animation happens and the button snaps back to size.

- When the amp sends back a message that the source has been updated, the colour state of the button changes.

This gives the user a quick visual indication of where the problem might be. The app has responded to user input, as denoted by the animation, but the command has not been fully completed, because the source has not changed colour. It seems utterly obvious, but without this the user may wonder whether the problem is with the app or with the AV receiver. Because the app visibly responds to gestures, the implication is that the problem lies with the connection.
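One way to picture the source button’s behaviour is as two independent flags, one driven by touch and one driven by the amp’s reply. The sketch below models only that logic; the type and event names are hypothetical, and the actual animations (the shrink, the snap back, the colour change) would hang off changes to these flags in the view layer.

```swift
/// Events that can affect a source button. Names are illustrative.
enum SourceButtonEvent {
    case touchDown           // finger lands: command is sent to the amp
    case touchUp             // finger lifts
    case ampConfirmedSource  // amp reports that the source has changed
}

/// Visual state of a source button, split into the part the app controls
/// (size) and the part only the amp's confirmation can change (colour).
struct SourceButtonState: Equatable {
    var isShrunk = false       // down state: drawn at reduced size
    var isHighlighted = false  // colour state: amp confirmed this source

    mutating func handle(_ event: SourceButtonEvent) {
        switch event {
        case .touchDown: isShrunk = true
        case .touchUp: isShrunk = false
        case .ampConfirmedSource: isHighlighted = true
        }
    }
}

// Usage: a tap that the amp never acknowledges. The button shrank and
// snapped back, so the app clearly responded, but the colour never changed.
var unacknowledged = SourceButtonState()
unacknowledged.handle(.touchDown)
unacknowledged.handle(.touchUp)

// Usage: the same tap followed by the amp's confirmation.
var acknowledged = unacknowledged
acknowledged.handle(.ampConfirmedSource)
```

Because the colour flag can only be set by the amp’s message, a button that animates but never changes colour points at the connection rather than the app.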

Another good use of down-state animation within the app is on the volume button. While the volume button is pressed and held, a timer fires every 0.5 seconds to send another volume command to the AV receiver. When the button is released, the timer is invalidated and no more commands are sent to the amp.

- To signify to the user that the button can be pressed and held, a down-state animation reducing the size of the button shape occurs when the user presses the button.

- The button remains in the down state whilst the user keeps their finger on it.

- To signify to the user that another instance of the volume command has been sent during this down state, a circle animation fades and scales in from the area of first touch and then fades out.

- When the button is released, the up-state animation is triggered, letting the user know no more actions are being sent from the button.
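The press-and-hold behaviour described above can be sketched with Foundation’s `Timer`. This is an illustration, not the app’s code: `VolumeHoldRepeater` is a hypothetical name, and sending one command immediately on press (before the first timer tick) is an assumption. The `sendCommand` closure stands in for whatever writes the volume command to the amp and kicks off the circle animation.

```swift
import Foundation

/// Repeats a command for as long as a button is held down.
final class VolumeHoldRepeater {
    private let interval: TimeInterval
    private let sendCommand: () -> Void
    private var timer: Timer?

    init(interval: TimeInterval = 0.5, sendCommand: @escaping () -> Void) {
        self.interval = interval
        self.sendCommand = sendCommand
    }

    /// Call on touch down. Sends once straight away (an assumption),
    /// then again every `interval` seconds until `release()` is called.
    /// Must be called on a thread with a running run loop, such as main.
    func press() {
        release()  // never leave an earlier timer running
        sendCommand()
        timer = Timer.scheduledTimer(withTimeInterval: interval, repeats: true) { [weak self] _ in
            self?.sendCommand()
        }
    }

    /// Call on touch up. Invalidates the timer so nothing more is sent.
    func release() {
        timer?.invalidate()
        timer = nil
    }
}
```

Wired to a button, `press()` would be called from the touch-down handler and `release()` from the touch-up and touch-cancel handlers.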

Results on Device

Below is a shot of a version of this hard work running on an actual device. Looking pretty swish, I think.

Taking my remote to the Watch

I’ve been playing with Watch apps for a bit now, so I’m quite familiar with the APIs available there. The one major downside of the Watch, in this instance, is that there is no networking API on watchOS like there is on iOS. This means we have to run commands through the iPhone and wait for the iPhone to receive a response from the amplifier before sending that back to the Watch. This adds a layer of fragility to the connection that I haven’t fully gotten around.
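The round trip through the phone can be sketched as a small relay: receive a message from the Watch, forward the command to the amp, and reply only once the amp has answered or failed. Everything named here (`AmpConnection`, `WatchRelay`, the `"command"`, `"response"` and `"error"` keys) is hypothetical. The dictionary-in, dictionary-out shape is chosen to resemble the messages WatchConnectivity passes between devices, but which API the app actually uses is not stated above.

```swift
import Foundation

/// Anything that can send a command to the amp and report its reply.
/// The completion receives nil when the amp did not respond.
protocol AmpConnection {
    func send(_ command: String, completion: @escaping (String?) -> Void)
}

/// Runs on the iPhone. Forwards a Watch message to the amp and replies
/// to the Watch only after the amp has answered or failed.
struct WatchRelay {
    let amp: AmpConnection

    func handle(message: [String: Any], reply: @escaping ([String: Any]) -> Void) {
        guard let command = message["command"] as? String else {
            reply(["error": "missing command"])
            return
        }
        amp.send(command) { response in
            if let response = response {
                reply(["response": response])
            } else {
                // Surfacing the failure lets the Watch show that the
                // command was sent but never confirmed.
                reply(["error": "no response from amp"])
            }
        }
    }
}
```

Each extra hop is another place the exchange can fail, which is why the relay reports a missing amp response explicitly instead of staying silent.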

Complications

Speaking of complications I encountered when building, I also made sure I encountered Complications with the Watch app. In most styles the Complication is little more than a quick launcher. However, in my favoured Complication style, Modular Large, I used the available space to bring in messaging about the current device status. It’s pretty, and it works via notifications posted from either the Watch or the phone. One downside, however, is that if the amplifier’s state is altered by a different input device, the Complication doesn’t update unless the app is connected at the time.

Taking my remote to the iPad

Thanks to the wonders of Auto Layout, taking my app to the iPad was relatively easy. The core UI is more of a proportional scale-up than anything else, but it works well. I implemented both portrait and landscape because on iPad I tend to spend more time in landscape, so I considered this quite important. Another consideration for iPad was implementing split-screen modes so that the remote can easily be used alongside another remote app. I personally find this leads to a really pleasing experience on iPad. It would be cool if I could pop open custom URLs in Split View, though.

Next Steps

More than any of the other apps I’m working on, this is the one I could get to the App Store the quickest, and the one where there might be an audience looking for exactly this simple yet powerful tool. To this end I just need to:

- Allow the user to select their device’s IP address

- Allow the user to select their type of AV receiver

- Update the commands and inputs based on the user’s selected AV receiver

- Set up testing for the user input settings

- Allow the user to rename, reorder and deactivate inputs

- Allow the user to change input icons

- Allow the user to admin their device settings

- Pass all of this user data to the Watch

- Beta test it

Once we’ve done all that, we are golden and I can begin the long, arduous process of getting my soul beaten down by customers on the App Store.